Personal Experience for Writing Better Code

This post shares my thoughts and experiences on how to make your code better. The post is divided into two parts: The first half is about how to write better code yourself, mainly introducing some code principles, patterns and practices. The second half focuses on how to collaborate more effectively on coding.

Disclaimer: The views expressed here are based purely on my personal experience and thoughts; please treat them as reference only. There may be inaccuracies or omissions, so constructive feedback and corrections are welcome. Note that this post was originally written for scientific researchers.

The code examples are either written in Rust or in C++.

Write Better Code Yourself

Clean code is well-organized and follows a consistent coding style. This consistency makes it easier for other developers to read and understand the code. Clean code is also well commented, with clear and concise comments that explain what the code does and why it is needed. This makes it easier for other developers to understand the purpose of the code.

The progression from principles to patterns to practices represents a gradual move from abstract concepts to concrete code. Principles refer to high-level abstract ideas and philosophies. Patterns describe mid-level reusable solutions to commonly occurring software design problems. Practices represent specific, granular techniques and examples for coding.

As one moves down this progression, the level of abstraction decreases, the scope narrows, and the guidance becomes more explicit and targeted. Principles remain broad and open to interpretation, while practices are focused and prescriptive. Patterns sit in the middle, capturing the essential elements of a solution without an excessive amount of detail.

So in short, this progression outlines a constructive path for taking lofty principles and turning them into pragmatic, tangible code. The principles motivate and inspire, the patterns show structured solutions, and the practices demonstrate how to implement those solutions in a specific programming language or framework. Together they represent a helpful spectrum of software design guidance at varying levels of breadth and depth.

Principles

Are principles necessary? Yes. The idea behind principles is that a problem can be solved in multiple ways, and design principles provide rules to eliminate bad solutions and pick a good one. They are not absolute truths, just a set of high-level guidelines that help ensure clean, simple and readable code.

CRISP

We all want to write clean code. But what is clean code? CRISP code might serve as a good approximation to clean code.

CRISP stands for Correct, Readable, Idiomatic, Simple, and Performant.

- Correctness is the prime quality. It might seem too obvious to state, but it still needs emphasis: we must make every effort to ensure the code is correct.

- Readability underpins both the quality and the efficiency of collaboration.

- Idiomatic, i.e. conventional. Conventions are useful. Picking the right names, organizing code in a logical fashion, having one idea per line of code: all these go towards cumulatively making your code readable and pleasant to work with.

- Simplicity. One aspect of simplicity is directness: it does what it says on the tin. It doesn't have weird and unexpected side effects, or conflate several unrelated things. Do not create new abstractions for no purpose other than avoiding repetition. Another aspect is frugality: doing a lot with a little. Do not be afraid of long functions.

- Performance. Don't waste time on useless optimizations. If you think the code is slow, benchmark it, find the cause, and then optimize.
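As a rough illustration of "measure before you optimize", the sketch below times a function with `std::time::Instant` from the standard library. The function `sum_of_squares` and the input size are made up for this example, and a single wall-clock measurement like this is only a first hint; a dedicated benchmarking harness is usually a better choice for serious measurements.

```rust
use std::time::Instant;

// Hypothetical workload, chosen only so that there is something to measure.
fn sum_of_squares(n: u64) -> u64 {
    (1..=n).map(|x| x * x).sum()
}

fn main() {
    let start = Instant::now();
    let result = sum_of_squares(1_000_000);
    let elapsed = start.elapsed();
    // Printing the result makes sure the computation is actually used.
    println!("result = {}, took {:?}", result, elapsed);
}
```

Build in release mode before drawing conclusions, since debug builds can be much slower than optimized ones.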

High cohesion, Loose coupling

High cohesion and loose coupling are software design principles that promote encapsulation and modularity.

High cohesion means that the functions and elements within a module or component are strongly related and focused. They work together to achieve a single, well-defined purpose. This makes the module coherent and its logic easier to understand and maintain.

Loose coupling means that a module or component does not have strong dependence on other modules or components. Its logic and functionality are self-contained. Changes in one module do not require modifications in the other module. This allows modules to evolve independently and reduces the risk of unintended side effects.

The benefits of high cohesion and loose coupling are:

- Easier to understand: Modules have a clear, single-minded purpose. Their interactions with other modules are minimal. This makes the overall design simpler to comprehend.

- More flexible: Loosely coupled modules can be modified, replaced or removed without significant rework of other modules. This makes the system adaptable to new requirements and technologies.

- More resilient: Bugs or changes are isolated within modules and do not propagate widely. This minimizes regression risk and unexpected side effects.

- Reusable: Highly cohesive and loosely coupled modules often serve as reusable, stand-alone components in other systems. They encapsulate a complete set of functionality for a given task or use case.

- Independent development: When functionality is properly partitioned into modules, teams can work on different modules simultaneously and in parallel. This allows faster iteration and delivery of new features or updates.

- Fault isolation: Errors and faults within a module do not easily contaminate other modules. This makes the system more robust, secure, and resilient to failure.

In summary, high cohesion and loose coupling are guiding principles for designing software that is understandable, flexible, reusable, and fault tolerant. They help structure a system into modules that are autonomous yet work together harmoniously.

SOLID

SOLID is a set of five design principles. These principles help software developers design robust, testable, extensible, and maintainable object-oriented software systems.

- SRP. The Single Responsibility Principle states that every module or class should have responsibility over a single part of the functionality provided by the software, and that responsibility should be entirely encapsulated by the class. For example, a class that shows the name of an animal should not be the same class that displays the kind of sound it makes and how it feeds.

"A class should have only one reason to change" - Robert C. Martin

// Good - parse_args has only one responsibility (parsing args)

fn parse_args(args: &[String]) -> Args {

/* ... */

}

fn do_something(args: Args) {

/* ... */

}

// Bad - do_something has two reasons to change (doing something and parsing args)

fn do_something() {

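// std::env::args() yields an iterator, so collect it into a Vec first

let raw: Vec<String> = std::env::args().collect();

let args = parse_args(&raw);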

/* ... */

}

- OCP. The Open-Closed Principle states that classes, modules, and functions should be open for extension but closed for modification. It means you should be able to extend the functionality of a class, module, or function by adding more code without modifying the existing code.

"I am the one who knocks!" - Walter White

trait Shape {

fn area(&self) -> f64;

}

struct Circle { /* ... */ }

impl Shape for Circle {

fn area(&self) -> f64 { /* ... */ }

}

struct Square { /* ... */ }

impl Shape for Square {

fn area(&self) -> f64 { /* ... */ }

}

fn total_area(shapes: &[&dyn Shape]) -> f64 {

shapes.iter().map(|s| s.area()).sum()

}

Here we can add new shapes without modifying total_area.

- LSP. The Liskov Substitution Principle is one of the most important principles in OOP. It states that child classes or subclasses must be substitutable for their parent classes or superclasses. In other words, the child class must be able to replace the parent class without altering the correctness of the program. This has the advantage of letting you know what to expect from your code.

To better illustrate it in OOP, we here use an example in C++:

#include <cassert>

class Rectangle {

public:

virtual void set_width(int w) { width = w; }

virtual void set_height(int h) { height = h; }

virtual int get_width() { return width; }

virtual int get_height() { return height; }

protected:

int width;

int height;

};

class Square : public Rectangle {

public:

virtual void set_width(int w) {

width = height = w;

}

virtual void set_height(int h) {

width = height = h;

}

};

void process(Rectangle& r) {

int w = r.get_width();

r.set_height(10);

// should be able to do this, but fails for Square!

assert(r.get_width() == w);

}

int main() {

Rectangle rec;

rec.set_width(5);

rec.set_height(10);

process(rec);

Square sq;

sq.set_width(5);

process(sq); // fails!

}

Here, Square violates the LSP because it cannot be substituted for Rectangle in the process() method. When we call set_height(10) on a Square, it also updates the width, breaking the assertion.

To fix this, Square should not redefine the set_width() and set_height() methods:

class Square : public Rectangle {

public:

virtual void set_side(int s) {

width = height = s;

}

};

// Now this works

int main() {

// ...

Square sq;

sq.set_side(5);

process(sq);

}

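Now Square can be substituted for Rectangle in process(). Note that Square still inherits the public set_width() and set_height(), so callers can make its sides unequal; where that matters, it is safer not to derive Square from a mutable Rectangle at all.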

Rust has no class inheritance, but trait objects provide a similar kind of substitutability:

trait Drawable {

fn draw(&self);

}

struct Circle { /* ... */ }

impl Drawable for Circle {

fn draw(&self) { /* ... */ }

}

struct Square { /* ... */ }

impl Drawable for Square {

fn draw(&self) { /* ... */ }

}

fn draw_all(drawables: &[&dyn Drawable]) {

for d in drawables {

d.draw();

}

}

Here we can pass Circle or Square to draw_all and have the correct draw method called.

- ISP. The Interface Segregation Principle states that clients should not be forced to implement interfaces or methods they do not use. More specifically, the ISP suggests that software developers should break down large interfaces into smaller, more specific ones, so that clients only need to depend on the interfaces that are relevant to them. This can make the codebase easier to maintain.

This principle is fairly similar to the single responsibility principle (SRP). But it’s not just about a single interface doing only one thing – it’s about breaking the whole codebase into multiple interfaces or components.

trait Shape {

fn area(&self) -> f64;

}

trait Drawable {

fn draw(&self);

}

struct Circle { /* ... */ }

impl Shape for Circle { /* ... */ }

impl Drawable for Circle { /* ... */ }

struct Square { /* ... */ }

impl Shape for Square { /* ... */ }

impl Drawable for Square { /* ... */ }

// Good - separate traits for different concerns

fn total_area(shapes: &[&dyn Shape]) -> f64 { /* ... */ }

fn draw_all(shapes: &[&dyn Drawable]) { /* ... */ }

// Bad - one big interface

trait ShapeAndDrawable {

fn area(&self) -> f64;

fn draw(&self);

}

- DIP. The Dependency Inversion Principle is about decoupling software modules. That is, making them as separate from one another as possible. It states that high-level modules should not depend on low-level modules. Instead, they should both depend on abstractions. Additionally, abstractions should not depend on details, but details should depend on abstractions. This makes it easier to change the lower-level code without having to change the higher-level code.

trait Logger {

fn log(&self, message: &str);

}

struct DbLogger { /* ... */ }

impl Logger for DbLogger { /* ... */ }

struct ConsoleLogger { /* ... */ }

impl Logger for ConsoleLogger { /* ... */ }

// High-level module depends on the abstraction

fn do_work(logger: &dyn Logger) {

/* ... */

logger.log("Did some work!");

}

fn main() {

let logger = DbLogger {};

do_work(&logger);

let logger = ConsoleLogger {};

do_work(&logger);

}
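One practical payoff of depending on the abstraction is that a test can pass in a logger that merely records messages. The sketch below is a self-contained variant of the example above; `MemoryLogger` is a hypothetical test double introduced here, not part of the original code.

```rust
use std::cell::RefCell;

trait Logger {
    fn log(&self, message: &str);
}

// Hypothetical test double: stores messages in memory instead of writing them anywhere.
struct MemoryLogger {
    messages: RefCell<Vec<String>>,
}

impl MemoryLogger {
    fn new() -> Self {
        MemoryLogger { messages: RefCell::new(Vec::new()) }
    }
}

impl Logger for MemoryLogger {
    fn log(&self, message: &str) {
        // RefCell allows mutation behind the `&self` required by the trait.
        self.messages.borrow_mut().push(message.to_string());
    }
}

// High-level code knows only the Logger trait.
fn do_work(logger: &dyn Logger) {
    logger.log("Did some work!");
}

fn main() {
    let logger = MemoryLogger::new();
    do_work(&logger);
    println!("{:?}", logger.messages.borrow());
}
```

Because `do_work` never names a concrete logger, the same function can be exercised with a database logger in production and with the in-memory one in tests.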

KISS

KISS stands for "Keep It Simple, Stupid". The core idea of the KISS principle is that simplicity should be a key goal in design, and unnecessary complexity should be avoided. Simple designs are more readable, testable, and maintainable. They have fewer bugs and edge cases. It means:

- Focusing on solving one specific problem at a time.

- Avoiding over-engineering or over-complicating designs.

- Using common sense and pragmatism in design decisions.

- Minimizing the number of components, code paths, algorithms, and data structures.

- Writing clean, concise, and well-structured code that is easy to understand.

- Hiding implementation details behind clear and minimal interfaces.

- Keeping a balanced and pragmatic mindset - simplicity is important but not at the cost of under-engineering.

In summary, the KISS philosophy aims for building software systems that are simple, minimal, pragmatic, and purposeful. It leads to designs that are easy to understand, debug, extend, and maintain.

YAGNI

'You Aren't Gonna Need It' suggests not adding extra features or optimizations that current requirements do not call for. Most of the time, you can't accurately predict what will ultimately be needed down the road. For example, do not extract an abstraction until you find that a piece of code is actually reused elsewhere in your codebase. In other words, first get something working, then make it optimal.

DRY

'Don't Repeat Yourself' is just a sophisticated way of saying don't copy and paste code. If you find yourself reaching for Ctrl+C/V, consider creating a function for the code and calling that function instead. But this does not necessarily mean you should always create an abstraction to bundle or encapsulate your code. This principle aims to simplify your code and improve decoupling and reusability, but if the code is more straightforward to understand when copied and pasted, then by all means do so.

There is an interesting example. If we want to print the numbers from 1 to 5, we could just write:

println!("This is number {}", 1);

println!("This is number {}", 2);

println!("This is number {}", 3);

println!("This is number {}", 4);

println!("This is number {}", 5);

And yes, it works and that is enough. According to the YAGNI principle, we don't have to make the design more flexible yet.

One day, we would like to print the numbers from 1 to 100. Can we just copy and paste to add 95 more lines? The answer is still yes. But this time, it would be natural for someone to stand up and say that we need a more efficient or even more flexible way to handle this situation. And this is the right time to optimize or refactor. So we DRY up the print, make it take an integer parameter, and run the whole thing in a loop:

for i in 1..=100 {

println!("This is number {}", i);

}

This also reduces the number of places where we can make a mistake. (When you copy and paste 100 lines, it is easy to miss a line that should have been changed.)

SoC

'Separation of Concerns' tells you to separate a program into distinct sections, such that each section addresses a separate concern. SoC seems like DRY's opposite cousin: it is about untangling code so that each part can work in isolation. Imagine we have two database tables, one for service lines and the other for card types. They have different names but the same columns. Should we DRY them up? The answer is no, because they serve different purposes.

It is interesting that we all parrot DRY and SoC as essential rules of how software is built. But when they butt heads we get stuck because we understand the words, not the idea.

Actually, they are both talking about 'concepts'. Despite superficial similarities of code, we should focus on the inner concepts. Are they talking about the same concepts? If yes, DRY. If no, Separate.
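The two-table situation can be sketched in Rust as two separate types that happen to have the same shape. The field names `id` and `name` and the function `describe_service_line` are assumptions made for illustration; the text above only says that the columns are the same.

```rust
// Same columns, different concepts, so they stay separate types.
#[derive(Debug, Clone, PartialEq)]
struct ServiceLine {
    id: u32,
    name: String,
}

#[derive(Debug, Clone, PartialEq)]
struct CardType {
    id: u32,
    name: String,
}

// Accepts only a ServiceLine; the compiler rejects a CardType here.
fn describe_service_line(line: &ServiceLine) -> String {
    format!("service line #{}: {}", line.id, line.name)
}

fn main() {
    let line = ServiceLine { id: 1, name: "Cardiology".to_string() };
    let card = CardType { id: 1, name: "Gold".to_string() };
    println!("{}", describe_service_line(&line));
    println!("{:?}", card);
    // describe_service_line(&card); // would not compile: mismatched types
}
```

Keeping the types apart costs a few duplicated lines, but each concept can later gain its own fields and rules without affecting the other.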

Observability

I think the most important part of observability is Logging/Tracing. Good logging helps you understand the flow of the program and track what is happening under the hood. It provides insights into the runtime behavior and state of the system that can be analyzed to troubleshoot issues, understand usage patterns, and improve performance. However, too much logging doesn’t help, since that obscures rather than clarifies.

Logs provide a historical record of discrete events that can be analyzed to establish operational baselines, identify patterns of usage, and debug software issues. Tracing on the other hand follows the flow of a request or transaction through the system end-to-end.

Having different levels of logging from DEBUG to ERROR helps you gain visibility into the system without being overwhelmed by too much information. DEBUG level logs are very verbose and meant for debugging purposes. INFO level logs capture high-level milestones, metrics, and other general information about program flow. WARNING level logs highlight potential issues or unexpected behavior that does not necessarily disrupt system functionality. ERROR level logs capture system errors and exceptions that disrupted the program flow. A good logging strategy uses these different log levels to gain optimal observability into a system.
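To make the idea of level filtering concrete, here is a minimal hand-rolled sketch. The `Level` enum and `Logger` struct are hypothetical and exist only for this example; real projects would normally rely on an established logging crate rather than writing their own.

```rust
// Levels are declared from least to most severe, so the derived ordering
// gives Debug < Info < Warning < Error.
#[derive(Debug, Clone, Copy, PartialEq, Eq, PartialOrd, Ord)]
enum Level {
    Debug,
    Info,
    Warning,
    Error,
}

// Hypothetical minimal logger: emits only messages at or above `min_level`.
struct Logger {
    min_level: Level,
}

impl Logger {
    fn enabled(&self, level: Level) -> bool {
        level >= self.min_level
    }

    fn log(&self, level: Level, message: &str) {
        if self.enabled(level) {
            eprintln!("[{:?}] {}", level, message);
        }
    }
}

fn main() {
    let logger = Logger { min_level: Level::Info };
    logger.log(Level::Debug, "cache lookup for key 42"); // filtered out
    logger.log(Level::Info, "request handled");
    logger.log(Level::Warning, "retrying after timeout");
    logger.log(Level::Error, "could not open config file");
}
```

Raising `min_level` in production and lowering it while debugging is what keeps the log useful rather than overwhelming.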

Patterns

Patterns represent typical solutions to recurring challenges or template solutions to well-known problems that emerge during various stages of the software development lifecycle. Numerous resources catalog different pattern types:

- Analysis patterns

- Design patterns

- Integration patterns

- Architecture, API, Cloud and other kinds of patterns

It's important to note that design patterns are not a one-size-fits-all solution, and developers should choose the appropriate pattern for each problem they are trying to solve.

Following are some patterns that I have found particularly fascinating and pragmatic in my experience.

Inheritance or Composition

Inheritance is about 'A is a B', while composition is about 'A has a B'. Compare 'a car is an engine' with 'a car has an engine and four tires': the second describes the relationship far better. Different situations call for different approaches, and inheritance is not the only choice. In most cases, consider composition first.

There are a few reasons why composition is often better than inheritance:

- Loose coupling. Composition allows two classes to be loosely coupled, where a change in one class does not affect the other class. Inheritance tightly couples the superclass and subclass, so a change in the superclass can affect the subclass.

- Flexibility. Composition allows new components to be added or replaced easily. Inheritance is static and the hierarchy cannot be changed at runtime.

- Testability. Classes composed of smaller units are easier to test. Inheritance can make testing difficult as you need to test the subclass in context of the superclass.

- Single Responsibility Principle. Composition typically leads to classes with a single responsibility. Inheritance can violate this principle as subclasses may have multiple responsibilities.

- Dependency Inversion Principle. Composition typically depends on abstractions rather than concrete classes. This allows greater flexibility. Inheritance leads to tight dependencies between concrete classes.

Here's a C++ example that shows the benefits of composition over inheritance.

Inheritance example:

class Engine {

public:

virtual void start() = 0;

};

class GasEngine : public Engine {

public:

void start() override {

// start gas engine

}

};

class Car : public GasEngine {

public:

void drive() {

start(); // tight coupling: Car is permanently a GasEngine

}

};

int main() {

Car car;

car.drive();

}

Here we have:

- An Engine base class

- A GasEngine subclass

- A Car class that inherits from GasEngine

- The Car is tightly coupled to the concrete GasEngine class

This is inflexible because:

- We can't change the engine implementation without modifying Car

- We can't add new engine types without modifying Car

- Car depends on the concrete GasEngine class rather than an abstraction.

Composition example:

class IEngine {

public:

virtual void start() = 0;

};

class GasEngine : public IEngine {

public:

void start() override {

// start gas engine

}

};

class ElectricEngine : public IEngine {

public:

void start() override {

// start electric engine

}

};

class Car {

private:

IEngine* engine;

public:

Car(IEngine* engine) {

this->engine = engine;

}

void start() {

engine->start(); // depends on abstraction

}

};

int main() {

Car gasCar(new GasEngine());

Car electricCar(new ElectricEngine());

gasCar.start(); // calls GasEngine::start()

electricCar.start(); // calls ElectricEngine::start()

}

Here we have:

- An IEngine interface as an abstraction

- GasEngine and ElectricEngine implementations of IEngine

- A Car class that holds an IEngine

- The Car depends on the abstraction IEngine, allowing different concrete engines to be used interchangeably.

This is flexible because:

- We can add new engine types (e.g. HybridEngine) without changing Car

- We can pass any implementation of IEngine to Car

- Car's start method will call the correct method based on the concrete IEngine implementation

- Much looser coupling between classes

So composition provides a more flexible and extendable design by depending on abstractions rather than concrete types.
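Rust has no class inheritance, so the composition version is the natural way to write this design there. The following is a sketch of the same car-and-engine idea with a trait object; returning a `String` from `start` is a choice made here so the behavior is easy to check, not something taken from the C++ code.

```rust
trait Engine {
    fn start(&self) -> String;
}

struct GasEngine;
struct ElectricEngine;

impl Engine for GasEngine {
    fn start(&self) -> String {
        "gas engine started".to_string()
    }
}

impl Engine for ElectricEngine {
    fn start(&self) -> String {
        "electric engine started".to_string()
    }
}

// Car *has* an engine; it never names a concrete engine type.
struct Car {
    engine: Box<dyn Engine>,
}

impl Car {
    fn new(engine: Box<dyn Engine>) -> Self {
        Car { engine }
    }

    fn start(&self) -> String {
        self.engine.start()
    }
}

fn main() {
    let gas_car = Car::new(Box::new(GasEngine));
    let electric_car = Car::new(Box::new(ElectricEngine));
    println!("{}", gas_car.start());
    println!("{}", electric_car.start());
}
```

Adding a new engine type means adding one more `impl Engine`, with no change to `Car`.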

Another direct comparison is OOP versus DOD (or its common practice, ECS).

OOP stands for Object-Oriented Programming. DOD stands for Data-Oriented Design. ECS stands for Entity-Component-System.

OOP code can be hard to predict, since a method may be overridden several times down an inheritance chain. It can also be slow because of dynamic dispatch, heap allocation and pointer dereferencing. While OOP helps us keep related units together and decouple logic, it also creates its own problems. Often we end up with a huge chain of inheritance and references; when one thing needs to change, dozens of others break.

Memory layout matters too: the CPU cache is used most efficiently when the data processed together is stored contiguously and at predictable positions. Designing software in such a data- and cache-friendly way is called Data-Oriented Design (DOD).

DOD tries to arrange data and functions in a way that allows the CPU to utilize its cache efficiently. We do not attach any logic to our data. Our data is merely a container that describes our objects. How that data is processed is up to other parts of the codebase.
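A common way to illustrate the layout difference is "array of structs" versus "struct of arrays". The sketch below uses a made-up particle update; the type and function names are assumptions for this example, and whether the second layout is actually faster depends on the access pattern, so it should be measured rather than assumed.

```rust
// Array-of-structs: each particle's fields sit next to each other in memory.
struct Particle {
    position: f32,
    velocity: f32,
    mass: f32, // not needed by the update below, but stored alongside the rest
}

fn advance_aos(particles: &mut [Particle], dt: f32) {
    for p in particles.iter_mut() {
        p.position += p.velocity * dt;
    }
}

// Struct-of-arrays: each field is stored contiguously, so the update
// below reads only the two arrays it needs.
struct Particles {
    positions: Vec<f32>,
    velocities: Vec<f32>,
    masses: Vec<f32>,
}

fn advance_soa(particles: &mut Particles, dt: f32) {
    for (pos, vel) in particles.positions.iter_mut().zip(&particles.velocities) {
        *pos += *vel * dt;
    }
}

fn main() {
    let mut aos = vec![
        Particle { position: 0.0, velocity: 1.0, mass: 2.0 },
        Particle { position: 1.0, velocity: -1.0, mass: 3.0 },
    ];
    advance_aos(&mut aos, 0.5);

    let mut soa = Particles {
        positions: vec![0.0, 1.0],
        velocities: vec![1.0, -1.0],
        masses: vec![2.0, 3.0],
    };
    advance_soa(&mut soa, 0.5);

    println!("{} {}", aos[0].position, soa.positions[0]);
}
```

Note that the data types carry no methods: the processing lives in free functions, in line with the idea that data is only a container.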

Test-Driven Development (TDD)

Test-Driven Development (TDD) is a software development process that aims to ensure the quality of the software by running a set of test cases more frequently. The philosophy behind this approach is to first implement a set of tests for every new feature being added to the software. These tests are expected to fail as the new feature has not been implemented yet. Once it is implemented, all previous tests and the new ones should pass.

Describe what you would like the software to be before developing new features.

By doing this, you "ensure" that the new feature is correct and that the previous ones were not affected by adding it. If you do this continuously, every new addition is checked for compliance with your design.

Besides, tests are the best in-code documentation. When we evaluate whether a third-party library is mature and well documented enough to use, we first check the documentation and then the tests. Comprehensive tests serve as documentation and as usage examples for the various parts of the interface. They also signal that the library is not a casual project but a well-maintained one.

But always remember:

- Tests can only prove that the code does what the test writer thought it should, and most of the time they don't even prove that.

- A faulty or insufficient test is much worse than no test at all, because it looks like you have tests.
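A test-first cycle can be sketched as follows. In a TDD workflow the tests in the `tests` module would be written first and fail, and the function body would then be filled in until they pass. The function `is_leap_year` is a made-up example chosen because its rules are easy to state.

```rust
// Step 2: the smallest implementation that makes the tests below pass.
fn is_leap_year(year: u32) -> bool {
    (year % 4 == 0 && year % 100 != 0) || year % 400 == 0
}

// Step 1: in a test-first workflow these are written before the function body
// and describe the desired behavior.
#[cfg(test)]
mod tests {
    use super::is_leap_year;

    #[test]
    fn years_divisible_by_four_are_leap_years() {
        assert!(is_leap_year(2024));
    }

    #[test]
    fn century_years_need_to_be_divisible_by_400() {
        assert!(!is_leap_year(1900));
        assert!(is_leap_year(2000));
    }

    #[test]
    fn other_years_are_not_leap_years() {
        assert!(!is_leap_year(2023));
    }
}

fn main() {
    println!("2024 is a leap year: {}", is_leap_year(2024));
}
```

The test names double as documentation of the rules, which is the sense in which tests describe what the software should be.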

Clean Boundaries: Learning Tests

While we are not responsible for directly testing third-party libraries and frameworks, we should write tests that exercise our usage of them. These are known as learning tests. Learning tests allow us to validate that our understanding and integration of a third-party API is correct. They are essentially free of cost - the time spent writing them pays off by giving us confidence in and knowledge of the APIs we depend on.

In addition, learning tests serve as monitors that can detect if and when a third-party update introduces unintended behavior changes. By running the tests after each update, we can catch incompatible changes early and address them. The test code should mimic how we call into the third-party library from our production code.

For example, suppose we use a fake library called AcmeUploader to upload files. We might write learning tests like this:

use acme::AcmeUploader;

#[test]

fn test_upload_success() {

let mut uploader = AcmeUploader::new();

uploader.upload("test.txt");

assert_eq!(uploader.status(), "Uploaded");

}

#[test]

fn test_invalid_file() {

let mut uploader = AcmeUploader::new();

uploader.upload("nonexistent.txt");

assert_eq!(uploader.status(), "Error");

assert!(uploader.last_error().contains("nonexistent.txt not found"));

}

These tests exercise our usage of the AcmeUploader API and verify our assumptions about its behavior. If in a future release, uploader.last_error() instead returned "File not found" (without the filename), our tests would catch that and alert us to the subtle breakage before it caused issues in production.

Besides, when you use a bunch of third-party APIs together, it is recommended to bundle them and wrap them in a module of your own. Such a shim layer between your code and third-party code reduces the coupling between the two, makes later adjustments and replacements easier, and encapsulates the details.
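A shim layer of this kind might look like the sketch below. Since AcmeUploader is a fictional library, the `acme` module here is a stand-in written for this example so the code is self-contained; `FileStore` and `save` are hypothetical names for our own wrapper.

```rust
// Stand-in for the third-party crate. `acme` and everything inside it are
// hypothetical; a real project would import the actual library instead.
mod acme {
    pub struct AcmeUploader {
        status: String,
    }

    impl AcmeUploader {
        pub fn new() -> Self {
            AcmeUploader { status: String::from("Idle") }
        }

        // Fake behavior: an empty filename fails, anything else succeeds.
        pub fn upload(&mut self, filename: &str) {
            self.status = if filename.is_empty() {
                String::from("Error")
            } else {
                String::from("Uploaded")
            };
        }

        pub fn status(&self) -> &str {
            &self.status
        }
    }
}

// The shim: the only place in our code that touches `acme` directly.
pub struct FileStore {
    inner: acme::AcmeUploader,
}

impl FileStore {
    pub fn new() -> Self {
        FileStore { inner: acme::AcmeUploader::new() }
    }

    // Translates the library's string status into a Result for our own code.
    pub fn save(&mut self, filename: &str) -> Result<(), String> {
        self.inner.upload(filename);
        match self.inner.status() {
            "Uploaded" => Ok(()),
            other => Err(format!("upload of {:?} failed with status {}", filename, other)),
        }
    }
}

fn main() {
    let mut store = FileStore::new();
    println!("{:?}", store.save("test.txt"));
    println!("{:?}", store.save(""));
}
```

If the library changes its status strings or is replaced altogether, only `FileStore` needs to be updated.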

Adapter Pattern

The Adapter Pattern allows the interface of an existing class to be used as another interface. It is often used when the interface of an existing class is not compatible with what the client code requires.

The Adapter Pattern consists of four main components:

- The Client: the code that uses the interface of the Target.

- The Target: the interface that the Client code requires.

- The Adaptee: the existing class whose interface is incompatible with the Target.

- The Adapter: the class that adapts the interface of the Adaptee to the interface of the Target.

// Target interface that the client code requires

trait MediaPlayer {

fn play(&self, audio_type: &str, filename: &str);

}

// Adaptee interface that needs to be adapted to the Target interface

trait AdvancedMediaPlayer {

fn play_vlc(&self, filename: &str);

fn play_mp4(&self, filename: &str);

}

// Adaptee implementation that needs to be adapted to the Target interface

struct VlcPlayer;

impl AdvancedMediaPlayer for VlcPlayer {

fn play_vlc(&self, filename: &str) {

println!("Playing VLC file. Name: {}", filename);

}

fn play_mp4(&self, _filename: &str) {

// do nothing

}

}

// Adaptee implementation that needs to be adapted to the Target interface

struct Mp4Player;

impl AdvancedMediaPlayer for Mp4Player {

fn play_vlc(&self, _filename: &str) {

// do nothing

}

fn play_mp4(&self, filename: &str) {

println!("Playing MP4 file. Name: {}", filename);

}

}

// Adapter implementation that adapts the Adaptee interface to the Target interface

struct MediaAdapter {

advanced_media_player: Box<dyn AdvancedMediaPlayer>,

}

impl MediaAdapter {

fn new(audio_type: &str) -> Self {

match audio_type {

"vlc" => {

MediaAdapter {

advanced_media_player: Box::new(VlcPlayer),

}

}

"mp4" => {

MediaAdapter {

advanced_media_player: Box::new(Mp4Player),

}

}

_ => panic!("Unsupported audio type: {}", audio_type),

}

}

}

impl MediaPlayer for MediaAdapter {

fn play(&self, audio_type: &str, filename: &str) {

match audio_type {

"vlc" => self.advanced_media_player.play_vlc(filename),

"mp4" => self.advanced_media_player.play_mp4(filename),

_ => panic!("Unsupported audio type: {}", audio_type),

}

}

}

// Client code that uses the Target interface

struct AudioPlayer;

impl AudioPlayer {

fn play_audio(&self, audio_type: &str, filename: &str) {

match audio_type {

"mp3" => println!("Playing MP3 file. Name: {}", filename),

"vlc" | "mp4" => {

let media_adapter = MediaAdapter::new(audio_type);

media_adapter.play(audio_type, filename);

}

_ => panic!("Unsupported audio type: {}", audio_type),

}

}

}

fn main() {

let audio_player = AudioPlayer;

audio_player.play_audio("mp3", "song.mp3");

audio_player.play_audio("vlc", "movie.vlc");

audio_player.play_audio("mp4", "movie.mp4");

}

In this example, we have a Target interface MediaPlayer that the client code requires. We also have an Adaptee interface AdvancedMediaPlayer that needs to be adapted to the Target interface. We have two Adaptee implementations, VlcPlayer and Mp4Player, which implement the AdvancedMediaPlayer interface. We then have an Adapter implementation, MediaAdapter, that adapts the AdvancedMediaPlayer interface to the MediaPlayer interface. Finally, we have the client code AudioPlayer, which uses the MediaPlayer interface.

In the AudioPlayer implementation, when an audio type is not supported natively (here, anything other than mp3), we create a new instance of MediaAdapter for that audio type and play the file through the play method of the MediaPlayer interface.

As we can see, the AudioPlayer implementation is able to play all three audio file types (mp3, vlc, and mp4) using the MediaPlayer interface, thanks to the Adapter Pattern. The MediaAdapter implementation adapts the AdvancedMediaPlayer interface to the MediaPlayer interface, allowing the client code to use the same interface for all audio file types.

Factory Pattern

At times, using constructors to instantiate objects is not optimal. Constructors are tightly coupled to their classes and must share the class name, limiting descriptive power. Passing multiple parameters to constructors can also be confusing, and adding new required parameters in the future may break existing code.

Rather than relying on constructors, we can use the Factory Pattern to create objects in a flexible manner. The Factory Pattern employs a separate method to handle object creation. This keeps the object creation logic in one place, gives the method a descriptive name unrelated to the class name, accepts any number of intuitive arguments, and can easily accommodate new optional arguments without affecting existing code. Overall, the Factory Pattern can prove a more elegant solution than constructors for object instantiation.

Here is an example in C++:

#include <iostream>

#include <memory>

#include <string>

class Car {

std::string model;

public:

Car(std::string model) {

this->model = model;

}

std::string getModel() {

return model;

}

};

class CarFactory {

public:

// Returns nullptr when the model is unknown.

static std::unique_ptr<Car> createCar(std::string model) {

if (model == "Camry") {

return std::make_unique<Car>("Toyota Camry");

} else if (model == "Civic") {

return std::make_unique<Car>("Honda Civic");

} else {

return nullptr;

}

}

};

int main() {

auto camry = CarFactory::createCar("Camry");

auto civic = CarFactory::createCar("Civic");

std::cout << camry->getModel() << '\n'; // Toyota Camry

std::cout << civic->getModel() << '\n'; // Honda Civic

}

Also, there is an example in Rust:

enum EngineType {

Gas,

Electric,

}

trait Engine {

fn start(&self);

}

struct GasEngine { }

impl Engine for GasEngine {

fn start(&self) {

println!("Starting gas engine...");

}

}

struct ElectricEngine {}

impl Engine for ElectricEngine {

fn start(&self) {

println!("Starting electric engine...");

}

}

struct EngineFactory;

impl EngineFactory {

fn create_engine(engine_type: EngineType) -> Box<dyn Engine> {

match engine_type {

EngineType::Gas => Box::new(GasEngine {}),

EngineType::Electric => Box::new(ElectricEngine {})

}

}

}

fn main() {

let gas_engine = EngineFactory::create_engine(EngineType::Gas);

let electric_engine = EngineFactory::create_engine(EngineType::Electric);

gas_engine.start();

electric_engine.start();

}
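The earlier point about descriptive names can also be shown with the simplest form of factory in Rust: associated functions that act as named constructors. `Temperature` and its functions are made up for this sketch.

```rust
#[derive(Debug, Clone, Copy, PartialEq)]
struct Temperature {
    kelvin: f64,
}

impl Temperature {
    // Each factory function states which unit its argument is in,
    // something a single `new(f64)` could not express.
    fn from_kelvin(kelvin: f64) -> Self {
        Temperature { kelvin }
    }

    fn from_celsius(celsius: f64) -> Self {
        Temperature { kelvin: celsius + 273.15 }
    }

    fn as_celsius(&self) -> f64 {
        self.kelvin - 273.15
    }
}

fn main() {
    let boiling = Temperature::from_celsius(100.0);
    let room = Temperature::from_kelvin(293.15);
    println!("{:?} {:.2}", boiling, room.as_celsius());
}
```

A call such as `Temperature::from_celsius(100.0)` reads unambiguously at the call site, and new creation functions can be added later without touching existing callers.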

Strategy Pattern

The Strategy Pattern is a behavioral design pattern that allows you to define a family of algorithms, put each of them into a separate class, and make their objects interchangeable.

The basic idea is to have a "Strategy" and a "Context". The Strategy defines an interface common to all supported algorithms. Context uses this interface to call the algorithm defined by a ConcreteStrategy.

This allows you to switch algorithms dynamically without needing to modify the Context.

Here's an example in Rust:

trait CompressionStrategy {

fn compress(&self, data: &[u8]) -> Vec<u8>;

}

struct DeflateCompression;

impl CompressionStrategy for DeflateCompression {

fn compress(&self, data: &[u8]) -> Vec<u8> {

// Deflate compression implementation goes here; this placeholder returns the input unchanged.

data.to_vec()

}

}

struct LZMACompression;

impl CompressionStrategy for LZMACompression {

fn compress(&self, data: &[u8]) -> Vec<u8> {

// LZMA compression implementation goes here; this placeholder returns the input unchanged.

data.to_vec()

}

}

struct Compressor {

strategy: Box<dyn CompressionStrategy>,

}

impl Compressor {

fn new(strategy: Box<dyn CompressionStrategy>) -> Compressor {

Compressor { strategy }

}

// Swap the algorithm at runtime without rebuilding the context.

fn set_strategy(&mut self, strategy: Box<dyn CompressionStrategy>) {

self.strategy = strategy;

}

fn compress(&self, data: &[u8]) -> Vec<u8> {

self.strategy.compress(data)

}

}

fn main() {

let data = vec![1u8, 2, 3];

let mut compressor = Compressor::new(Box::new(DeflateCompression));

let deflated = compressor.compress(&data);

compressor.set_strategy(Box::new(LZMACompression));

let lzma = compressor.compress(&data);

println!("{} bytes (Deflate), {} bytes (LZMA)", deflated.len(), lzma.len());

}

Here we have a Compressor context that uses a compression strategy. We have two compression strategy implementations, DeflateCompression and LZMACompression. The context is able to swap between these strategies at runtime and call the compress() method on the active strategy.

This allows us to easily change the compression algorithm used without needing to modify the context.

Dependency Injection

Dependency injection is a technique in which a class receives its dependencies from external sources rather than creating them itself. The benefits of dependency injection include increased flexibility and modularity in software design, improved testability, and reduced coupling between components. By decoupling objects from their dependencies, changes can be made more easily without affecting other parts of the system.

For example, let’s say we have a service class that depends on a database connection. If we create the database connection within the class, we create a tight coupling between the class and the database. This means that any changes to the database connection will require changes to the class, making the code less flexible and harder to maintain.

With dependency injection, we can pass the database connection to the class from outside, making the code more modular and easier to test. This also allows us to swap out the database connection with a different implementation, such as one that interacts with a different database or an external API, without having to modify the class itself.

A C++ example:

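#include <string>

#include <utility>

class Database {

public:

explicit Database(std::string db_name) : name_(std::move(db_name)) {}

private:

std::string name_;

};

class UserService {

public:

// The database is injected by the caller; the service does not create it.

explicit UserService(Database& db) : db_(db) {}

private:

Database& db_; // not owned, must outlive the service

};

int main() {

Database db("my_database");

UserService service(db);

// Use service...

}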

A Rust example:

struct Database {

name: String

}

struct UserService {

db: Database

}

impl UserService {

fn new(db: Database) -> Self {

UserService { db }

}

}

fn main() {

let db = Database { name: "my_database".to_string() };

let service = UserService::new(db);

// Use service...

}

The UserService depends on Database but receives an instance through its constructor instead of creating one itself. This makes the dependency explicit and lets the caller decide which database to use. For UserService to work with any Database implementation, including a mock in tests, Database should additionally be an interface (an abstract class in C++ or a trait in Rust) rather than the concrete type used in these simplified examples.
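To show how injection enables testing, here is a minimal sketch in which the dependency is a trait. The names UserStore, InMemoryStore, and greeting are hypothetical and chosen only for illustration; a real project would put a database-backed implementation behind the same trait.

```rust
use std::collections::HashMap;

/// The abstraction the service depends on (hypothetical name).
trait UserStore {
    fn find_name(&self, id: u32) -> Option<String>;
}

/// An in-memory stand-in for a real database, handy in tests.
struct InMemoryStore {
    users: HashMap<u32, String>,
}

impl UserStore for InMemoryStore {
    fn find_name(&self, id: u32) -> Option<String> {
        self.users.get(&id).cloned()
    }
}

/// The service is handed a store; it never builds one itself.
struct UserService {
    store: Box<dyn UserStore>,
}

impl UserService {
    fn new(store: Box<dyn UserStore>) -> Self {
        UserService { store }
    }

    fn greeting(&self, id: u32) -> String {
        match self.store.find_name(id) {
            Some(name) => format!("Hello, {}!", name),
            None => String::from("Hello, stranger!"),
        }
    }
}

fn main() {
    let mut users = HashMap::new();
    users.insert(1, String::from("Ada"));
    let service = UserService::new(Box::new(InMemoryStore { users }));
    println!("{}", service.greeting(1));
    println!("{}", service.greeting(2));
}
```

Because UserService only knows the trait, swapping the in-memory store for a real one requires no change to the service.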

Practices

RAII

RAII stands for Resource Acquisition Is Initialization. It is a programming technique that ties the lifetime of resources to the lifetime of the objects that own them. When an object goes out of scope, its destructor is called, which frees any owned resources.

For example in Rust:

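use std::fs::File;

fn main() -> std::io::Result<()> {

{

let file = File::open("foo.txt")?; // `?` returns early if the file cannot be opened

// use the file

} // file goes out of scope, file is closed automatically

Ok(())

}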

Here, the File object acquires the resource (the open file handle) during initialization. When the File goes out of scope, the destructor is called, closing the file handle and freeing the resource.

This ensures that resources are properly cleaned up even if there is an early return or a panic (as long as the panic unwinds the stack rather than aborting the process).

This is in contrast to languages where you need to manually manage resource cleanup, like C:

FILE* f = fopen("foo.txt", "r");

// do some stuff

fclose(f); // manually close the file

If you forget to call fclose, the file handle will be leaked.

C++ works the same way: acquire the resource in the constructor and release it in the destructor.

In summary, RAII handles resource cleanup in a scope-based, systematic manner. It reduces errors from forgetting to manually clean up resources and leads to cleaner, safer code.
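The same idea applies to your own types. Below is a minimal sketch, assuming a hypothetical TempResource that records its acquisition and release in a shared log; in Rust the cleanup code lives in the Drop trait.

```rust
use std::cell::RefCell;
use std::rc::Rc;

/// Hypothetical resource that logs when it is acquired and released.
struct TempResource {
    name: String,
    log: Rc<RefCell<Vec<String>>>,
}

impl TempResource {
    fn acquire(name: &str, log: Rc<RefCell<Vec<String>>>) -> Self {
        log.borrow_mut().push(format!("acquire {}", name));
        TempResource { name: name.to_string(), log }
    }
}

impl Drop for TempResource {
    // Runs automatically when the value goes out of scope.
    fn drop(&mut self) {
        self.log.borrow_mut().push(format!("release {}", self.name));
    }
}

/// Returns early for odd inputs; the resource is released on every path.
fn work(input: u32, log: Rc<RefCell<Vec<String>>>) -> Option<u32> {
    let _res = TempResource::acquire("scratch", log);
    if input % 2 == 1 {
        return None; // early return: drop still runs
    }
    Some(input / 2)
}

fn main() {
    let log = Rc::new(RefCell::new(Vec::new()));
    let _ = work(3, Rc::clone(&log));
    let _ = work(4, Rc::clone(&log));
    for line in log.borrow().iter() {
        println!("{}", line);
    }
}
```

Note the binding is named `_res` rather than `_`: a plain `_` pattern would drop the value immediately instead of at the end of the scope.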

Refactor

Code refactoring is the process of improving the internal structure of existing code without changing its external behavior. Done well, it improves readability and maintainability, and it often makes later work on scalability and performance easier.

Refactoring should be integrated into the daily development process. It is best done constantly in small increments. It is a good idea to allocate specific time for refactoring and break down larger refactoring tasks into smaller manageable ones. Involving the whole team in the refactoring process leads to the best results.

When implementing something in a method, it helps a lot to spend extra time refactoring just that method. However, that does not mean the whole class or codebase needs to be refactored at once. Refactoring in small steps is the key.

Below are several levels of refactoring, from local changes to architectural ones.

- Extract Method/Extract Class

I believe most software is initially written with clear steps that show the whole process. However, as time goes by, more code is added to existing methods without introducing new sub-methods where needed. The result is methods with hundreds of consecutive statements and no structure. While still functional, the code is now much harder to understand, and it is difficult to see the bigger picture when you read it for the first time.

To fix this, we reorganize the code by grouping related functionality together. Then, we extract these groups into separate, focused methods. The size of the extraction can vary, but each method should do one clear thing. Extraction is often iterative, hierarchical, and ongoing as you explore the code.

As you do this, also rename variables for clarity, simplify logic, and add relevant comments. Method extraction can continue throughout development. You don't need a comprehensive understanding of the entire codebase to start—pick parts to reorganize and simplify piece by piece.

Similarly, extract classes to each be responsible for only one concept (Single Responsibility Principle). Break up large classes into focused, independent classes.

In summary, Extract Method and Extract Class are refactoring techniques to restructure messy code into clean, readable, and modular code through focused extractions and simplification. Start small, iterate, and watch the code become more understandable over time.
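As a small illustration, imagine a single long function that parses "name,score" lines, averages the scores, and formats a summary, all inline. The sketch below shows what it might look like after extraction; the function names and the input format are made up for this example.

```rust
/// Parses one "name,score" line; returns None for malformed input.
fn parse_line(line: &str) -> Option<(String, f64)> {
    let (name, score) = line.split_once(',')?;
    let score = score.trim().parse::<f64>().ok()?;
    Some((name.trim().to_string(), score))
}

/// Averages the scores; returns None when there are none.
fn average(scores: &[f64]) -> Option<f64> {
    if scores.is_empty() {
        return None;
    }
    Some(scores.iter().sum::<f64>() / scores.len() as f64)
}

/// The top-level function now reads as a short list of steps.
fn summarize(input: &str) -> String {
    let records: Vec<(String, f64)> = input.lines().filter_map(parse_line).collect();
    let scores: Vec<f64> = records.iter().map(|(_, s)| *s).collect();
    match average(&scores) {
        Some(avg) => format!("{} records, average {:.1}", records.len(), avg),
        None => String::from("no valid records"),
    }
}

fn main() {
    println!("{}", summarize("alice,1\nbob,2\nmalformed\ncarol, 3"));
}
```

Each helper does one clear thing and can be tested on its own, which is most of the benefit of the extraction.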

- Improve Code Reusability

Reusability brings us back to the DRY principle.

Duplicated code can lead to several problems, including increased maintenance costs, difficulty in making changes to the codebase, and a higher risk of introducing bugs.

While refactoring, look out for duplicated code. When you find it, one way to deal with it is to move it into a single reusable function or method.

As for specific ways to improve reusability, the first that come to mind are the Extract Method and Extract Class techniques mentioned above. There are also others, such as encapsulating data into entity classes, replacing inheritance with composition, and combining inheritance with the template method.
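A minimal sketch of removing duplication: suppose two functions each contained their own copy of the same "scale into the range 0 to 1 and clamp" arithmetic. Moving it into one helper leaves a single place to fix. The function names and ranges here are hypothetical.

```rust
/// Shared helper: the one place that knows how to scale and clamp.
fn normalize(value: f64, min: f64, max: f64) -> f64 {
    ((value - min) / (max - min)).clamp(0.0, 1.0)
}

/// Previously duplicated the arithmetic inline; now delegates.
fn temperature_level(celsius: f64) -> f64 {
    normalize(celsius, -20.0, 40.0)
}

/// Previously duplicated the arithmetic inline; now delegates.
fn battery_level(millivolts: f64) -> f64 {
    normalize(millivolts, 3000.0, 4200.0)
}

fn main() {
    println!("temperature level: {}", temperature_level(10.0));
    println!("battery level: {}", battery_level(3600.0));
}
```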

- Reduce Hard-Code for Maintenance

Replace magic numbers with symbolic constants.

Hard-coded numbers confuse readers because their purpose is not stated. They also invite errors: when the value needs to change, it is easy to update some occurrences and forget others. Giving a hard-coded value a meaningful name helps others understand it and reduces the risk of mistakes when the value changes.
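For instance, a retry routine might otherwise be littered with bare 3s and 200s. The sketch below names them instead; the constants and their values are illustrative assumptions, not recommendations.

```rust
/// Hypothetical limits; the values are illustrative only.
const MAX_RETRIES: u32 = 3;
const BASE_DELAY_MS: u64 = 200;

/// Delay before the given retry attempt, doubling each time.
/// Returns None once the retry budget is used up.
fn retry_delay_ms(attempt: u32) -> Option<u64> {
    if attempt >= MAX_RETRIES {
        return None; // give up
    }
    Some(BASE_DELAY_MS << attempt)
}

fn main() {
    for attempt in 0..=MAX_RETRIES {
        println!("attempt {}: {:?}", attempt, retry_delay_ms(attempt));
    }
}
```

Changing the retry policy now means editing one line, and the names document what the numbers mean.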

- Enhance Extensibility

By enhancing extensibility, we make the system easier to adapt to future requirement changes, which matters most when we want to iterate fast. The general idea is not to over-design for extensibility in advance, but to anticipate only the most likely requirement changes. When a change does arrive, think about what further changes may follow and refactor the code accordingly.

One core principle that guides extensibility is the Open-Closed Principle from SOLID: code should be open for extension but closed for modification. In this way, we can satisfy new requirements while minimizing the impact on the original code and existing features. We certainly don't want to install the car doors at the cost of removing the wheels. The key point is to isolate the new code from the original code. Refer to the SOLID section for a specific code example.

As for specific extensibility techniques, e.g., the template method or AOP (Aspect-Oriented Programming), we leave them for readers to explore.

- Decoupling

Decoupling makes modules more 'pluggable', so they are easier to upgrade or change independently. Besides the methods mentioned above, design patterns are a useful tool for decoupling code.

When designing modules, we should draw clean boundaries and make the interfaces easy to understand and easy to extend. We can wrap third-party code in a thin shim layer for better isolation and easier debugging. This is also the core idea of the Adapter Pattern: we design a unified adapter interface that is stable and does not change when the external system changes. The internal system only needs to know what this adapter interface looks like.

We can also leverage other patterns for better decoupling, e.g., the Strategy Pattern to decouple algorithms from the code that uses them.
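Here is a minimal sketch of such a shim layer. The vendor module stands in for a third-party library we do not control; it, the Logger trait, and the severity codes are all hypothetical.

```rust
/// Stand-in for a third-party API we do not control (hypothetical).
mod vendor {
    pub struct LegacyLogger;

    impl LegacyLogger {
        pub fn write_entry(&self, severity: u8, text: &str) -> String {
            format!("[{}] {}", severity, text)
        }
    }
}

/// The stable interface the rest of our code depends on.
trait Logger {
    fn info(&self, message: &str) -> String;
    fn error(&self, message: &str) -> String;
}

/// Severity codes assumed for the hypothetical vendor API.
const SEVERITY_INFO: u8 = 1;
const SEVERITY_ERROR: u8 = 3;

/// Thin shim: the only place that knows about the vendor API.
struct VendorLoggerAdapter {
    inner: vendor::LegacyLogger,
}

impl Logger for VendorLoggerAdapter {
    fn info(&self, message: &str) -> String {
        self.inner.write_entry(SEVERITY_INFO, message)
    }

    fn error(&self, message: &str) -> String {
        self.inner.write_entry(SEVERITY_ERROR, message)
    }
}

/// Internal code sees only the Logger trait.
fn report_disk_full(logger: &dyn Logger) -> String {
    logger.error("disk full")
}

fn main() {
    let logger = VendorLoggerAdapter { inner: vendor::LegacyLogger };
    println!("{}", logger.info("starting"));
    println!("{}", report_disk_full(&logger));
}
```

If the vendor library changes its API, only VendorLoggerAdapter needs to be updated.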

- Layering

As our system refactoring gradually deepens, we should begin to consider refactoring from a higher perspective and from the perspective of system architecture. One important aspect to consider in this process is layering.

Layering is important in refactoring because it helps to reduce code complexity and increase maintainability. By organizing code into distinct layers, we can isolate functionality and reduce the number of dependencies between different parts of the codebase. This, in turn, makes it easier to modify and maintain the codebase over time.

There are many different ways to organize code into layers, and the specifics will depend on the needs and requirements of the system being refactored. However, a common approach is to organize code into three layers: User-Interactive, Core Logic, and Bare Metal.

The User-Interactive layer typically includes the user interfaces and any related components. It is responsible for handling user input and displaying information to the user.

The Core Logic layer contains the core functionality of the system. It is responsible for implementing the algorithms that drive the system, and often includes services, models, and other domain-specific objects.

The Bare Metal layer is responsible for managing system resources, for example reading and writing data in the database or sending packets over the network.

By separating the code into these distinct layers, we can reduce the coupling between different parts of the system, and make it easier to maintain and extend over time.

Overall, layering is an important technique to consider when refactoring code, as it can help to improve the overall organization, maintainability, and extensibility of the codebase.
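The three layers can be sketched with plain modules. This is a toy example: the module and function names are invented, and the Bare Metal layer returns fixed data where a real one would read a file, database, or sensor.

```rust
/// Bare Metal: talks to the outside world (here, a fixed stand-in).
mod bare_metal {
    pub fn load_readings() -> Vec<f64> {
        vec![20.5, 21.0, 22.5] // placeholder for real I/O
    }
}

/// Core Logic: pure computation, no I/O and no formatting.
mod core_logic {
    pub fn peak(values: &[f64]) -> Option<f64> {
        values.iter().copied().reduce(f64::max)
    }
}

/// User-Interactive: turns results into something a person reads.
mod user_interactive {
    pub fn render(peak: Option<f64>) -> String {
        match peak {
            Some(p) => format!("Peak reading: {:.1}", p),
            None => String::from("No readings available"),
        }
    }
}

fn main() {
    let readings = bare_metal::load_readings();
    let peak = core_logic::peak(&readings);
    println!("{}", user_interactive::render(peak));
}
```

Each layer only calls the one below it, so the core logic can be tested without any I/O or user interface.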

- DDD (Domain-Driven Design)

Domain-Driven Design (DDD) is an approach to software development that emphasizes understanding the domain (i.e., the problem space) in which a software system operates, and designing the software so that it closely reflects the language and concepts of that domain. This is the ultimate step in refactoring, and it relies heavily on domain-specific knowledge to arrive at a better software design.

Documentation

Code documentation is essential. It:

- Explains how code works and why it was built that way. This shared knowledge enables new developers to quickly understand the codebase.

- Reduces maintenance costs. Documentation serves as institutional knowledge that outlives individual developers. Without it, projects become difficult to sustain as teams change over time.

- Enables collaboration. Documentation ensures all team members have a common understanding of conventions, architecture, and design decisions. This makes it easier to work together productively.

- Allows for confident changes and extensions. Clear documentation gives developers the context they need to make modifications or add new features without introducing bugs or breaking existing functionality.

- Clarifies intent and purpose. Code alone does not adequately convey its goals and rationale. Documentation provides the "why" behind the code.

Here are six tips for writing better documentation:

- Be Clear and Concise. Use simple and straightforward language, and avoid unnecessary jargon. Examples or code snippets are also recommended.

- Use Meaningful Names.

- Provide Context. Document the purpose, functionality, and usage of the code, including any assumptions, limitations, or dependencies.

- Keep Documentation Updated.

- Use Consistent Formatting and Style.

- Document Edge Cases and Error Handling. This helps developers understand how the code behaves in different scenarios and how to handle errors or exceptions correctly. It also serves as a reference for troubleshooting and debugging.
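A short sketch of what these tips can look like in practice, using Rust doc comments. The function page_count is a made-up example; the point is the shape of the comment, which states purpose, rationale, and edge cases.

```rust
/// Returns how many pages are needed to show `total` items,
/// `page_size` items per page.
///
/// # Why
/// Callers need the count up front to render pagination controls.
///
/// # Edge cases
/// - `total == 0` yields `Some(0)`.
/// - `page_size == 0` yields `None` rather than dividing by zero.
fn page_count(total: usize, page_size: usize) -> Option<usize> {
    if page_size == 0 {
        return None;
    }
    // Add one page when the last page is only partially filled.
    Some(total / page_size + usize::from(total % page_size != 0))
}

fn main() {
    println!("{:?}", page_count(11, 10));
}
```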

Collaborate Effectively

Conventional Commit

The complete specification can be found at conventionalcommits.org.

This is a popular commit convention: a specification for adding human- and machine-readable meaning to commit messages, originally derived from the AngularJS commit guidelines. The commit message should be structured as follows:

<type>[optional scope]: <description>

[optional body]

[optional footer(s)]

<type> is used to indicate the purpose of a commit in a project's version control history. Here's what each of them typically means:

- feat: The addition of a new feature.

- fix: The resolution of a bug or issue.

- refactor: Changes to the codebase that do not add new features or fix bugs, but improve the overall structure or readability of the code.

- docs: Changes to documentation files, such as user guides, README files, or API documentation.

- test: Changes or additions to automated tests, such as unit tests, integration tests, or end-to-end tests.

- build: Changes related to the build system or external dependencies, such as package updates, build tool configuration changes, or CI/CD pipeline updates.

- chore: Routine maintenance that does not change source or test code, such as updating .gitignore or other housekeeping tasks not covered by the types above.

- ci: Changes to the continuous integration (CI) configuration files or scripts.

- perf: Changes related to performance optimization, such as code refactoring to improve execution speed or reduce memory usage.

- revert: A commit that undoes a previous commit.

- style: Changes to the code that do not affect its functionality, but improve its readability or aesthetics, such as code formatting, indentation, or naming conventions.

A <scope> MUST consist of a noun describing a section of the codebase surrounded by parentheses, e.g., fix(parser):

A <description> MUST immediately follow the colon and space after the type/scope prefix. The description is a short summary of the code changes, e.g., fix: array parsing issue when multiple spaces were contained in string.

A longer commit body MAY be provided after the short description, providing additional contextual information about the code changes. The body MUST begin one blank line after the description.

A modified example from conventionalcommits.org:

fix(api): prevent racing of requests

Introduce a request id and a reference to latest request. Dismiss

incoming responses other than from latest request.

Remove timeouts which were used to mitigate the racing issue but are

obsolete now.

Reviewed-by: Z

Refs: #123
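Because the format is machine readable, the first line is easy to check automatically. The following is a minimal sketch of a header parser, not an official implementation: Header and parse_header are hypothetical names, and it deliberately ignores parts of the specification not covered above, such as the `!` breaking-change marker.

```rust
/// Parsed first line of a commit message (hypothetical helper type).
#[derive(Debug, PartialEq)]
struct Header {
    kind: String,
    scope: Option<String>,
    description: String,
}

/// Parses "<type>[(scope)]: <description>"; returns None if malformed.
fn parse_header(line: &str) -> Option<Header> {
    // The description follows the first ": " after the type/scope prefix.
    let (prefix, description) = line.split_once(": ")?;
    if description.trim().is_empty() {
        return None;
    }
    // An optional scope sits in parentheses right after the type.
    let (kind, scope) = match prefix.split_once('(') {
        Some((kind, rest)) => {
            let scope = rest.strip_suffix(')')?;
            if scope.is_empty() {
                return None;
            }
            (kind, Some(scope.to_string()))
        }
        None => (prefix, None),
    };
    if kind.is_empty() || !kind.chars().all(|c| c.is_ascii_alphabetic()) {
        return None;
    }
    Some(Header {
        kind: kind.to_string(),
        scope,
        description: description.to_string(),
    })
}

fn main() {
    println!("{:?}", parse_header("fix(api): prevent racing of requests"));
    println!("{:?}", parse_header("not a conventional commit"));
}
```

A check like this could run in a commit hook or CI job to reject malformed messages early.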

PR

Pull Request <= PR => Peer Review

It is easy to lose track of what is important when submitting and reviewing code. I will share some of my experience on where to focus to improve the collaboration process.

PR is usually read as the abbreviation of Pull Request. A pull request is a way to tell other developers that you have created a new branch containing a new version of the source code. Others can then see the differences and comment on them, eventually approving or declining the merge of your changes into the mainline.

"Two heads are better than one."

The theory behind this is that by giving others a chance to look at your proposed changes, they can spot errors you missed before those errors reach production.

In fact, PR also stands for the process of Peer Review. It serves as a knowledge-sharing mechanism for other team members. Given how complex software development is, and how often agile/scrum is adopted, you can’t afford silos where only a single individual is capable of understanding a piece of code.

A common scenario of PR is like this:

John is a developer who worked for a full week adding a new feature to our product. He creates a PR with 55 files, and no one reviews it for a couple of days.

After asking for help for the third time, he receives one of two types of feedback:

- 3 comments on the choice of variable names, a suggestion to use forEach instead of a for loop, and an LGTM at the end.

- A single comment describing how the feature/integration/abstraction is not correct and requires a major rewrite.

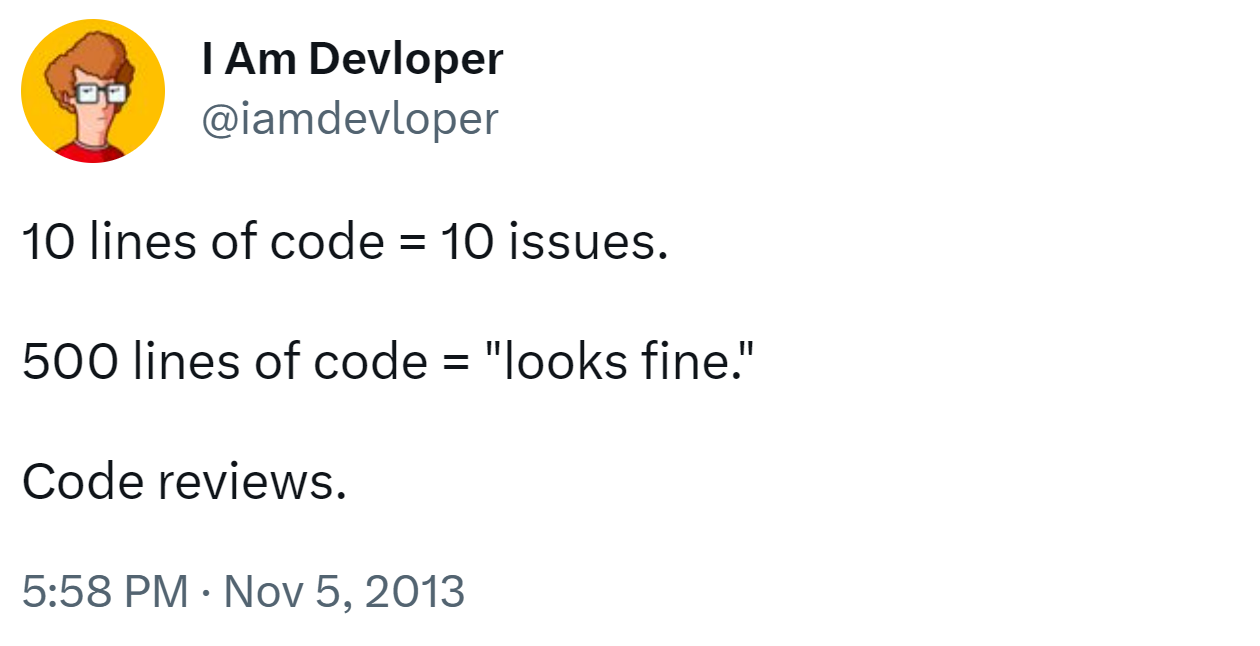

A famous joke summarizes as:

Let’s dissect the scenario and look at the potential issues and their consequences.

- "Worked for a full week"

A week is too long to go without feedback. A lot can change or go wrong before anyone takes a look. This is why opening a draft PR early, with a checklist to track your changes, is welcome: it lets you get feedback frequently.

- "Creates the PR with 55 files"

A PR should be focused. It should address only one thing and ideally contain only a few commits. A small PR is easier for reviewers to follow and understand. If the scope of the changes is too big for reviewers to understand, they are tempted to shift their focus from whether the solution correctly meets the requirements to code style.

Several studies also show that it is harder to find bugs when reviewing a lot of code at once.

A study of a Cisco Systems programming team found that reviewing 200–400 LOC over 60 to 90 minutes should yield 70–90% defect discovery. A post from smallbusinessprogramming shows that pull requests with more than 250 changed lines tend to take more than 1 hour to review. With these numbers in mind, a good pull request should not change more than about 250 lines of code.

Hence, we should break down large pull requests. Split a big feature into small pieces that make sense on their own and can be merged into the codebase one by one without breaking anything.

- "No one reviews it for a couple of days"

The reviewer should start reviewing early, even without enough time to go through the whole change at once.

As we can see, there are issues on both sides, from those sending the PR and those performing the review. Let’s discuss how to improve.

Before we talk about specific actions, we should know what to expect from a good review process. For me, there are two main goals:

- Reduce the number of bugs introduced to the mainline

- Reduce the accidental technical debt accrued

Review Process

We first introduce the common code review process described in the ICSE-SEIP '18 paper Modern Code Review: A Case Study at Google:

- Creating: Authors start modifying, adding, or deleting some code; once ready, they create a change.

- Previewing: Authors should view the diff of the change and view the results of automatic code analyzers. When they are ready, authors "mail" the change out to one or more reviewers.

- Commenting: Reviewers view the diff in the web UI, drafting comments as they go. Program analysis results, if present, are also visible to reviewers. Unresolved comments represent action items for the change author to definitely address. Resolved comments include optional or informational comments that may not require any action by a change author.

- Addressing Feedback: The author now addresses comments, either by updating the change or by replying to comments. When a change has been updated, the author uploads a new snapshot. The author and reviewers can look at diffs between any pairs of snapshots to see what changed.

- Approving: Once all comments have been addressed, the reviewers approve the change and mark it ‘LGTM’ (Looks Good To Me). To eventually commit a change, a developer typically must have approval from at least one reviewer.

Peer Review (Reviewer)

Here are some tips for reviewers:

- Focus review on clarity and correctness first, then standards

To review a PR, first evaluate if it appropriately solves the intended problem. This is difficult to separate from the clarity of the solution, including clearly named variables, functions, classes, and logical execution paths.

Once you comprehend the solution and its correctness, which can be aided by examining the tests, focus on adherence to standards. Standards here refer primarily to the established coding and architecture practices of your team or domain, rather than superficial style conventions. With an unclear or incorrect solution, standards compliance means little. But when the approach is sound, bringing it in line with accepted practices helps maintain project consistency and quality over the long run.

- Substantiate your feedback

The PR is a bi-directional learning opportunity. Whether your feedback is positive or negative, try to explain it, so that the author has enough background to understand why what you are asking for is (potentially) relevant.

Here is an example:

Bad Review: "This code is bad. Why are you doing a linear search?"

Good Review: "This code block could be optimized by using a binary search instead of a linear one. Applying this would improve performance when searching large data sets."

The bad review criticizes without proposing an alternative or explaining why the code needs to be improved. The good one suggests an optimization and explains why the different approach improves the function.

- Don’t be afraid to ask questions

If you do not understand enough about the context or the code, you may be tempted to keep your questions to yourself and focus on the easy stuff (a valid but minor naming suggestion, for instance). Do not hold back. A respectfully asked question only signals that what was obvious to the author is not so obvious to others!

Pull Request (Author)

Here are some notes for authors:

- Keep your changes concentrated and small

There is no perfect number of files or lines of code as languages and context will largely differ, but the idea is to break down any change into smaller, self-contained pieces of functionality.

- Review your code before submitting

Take a short break and focus on something else to reset your mindset, then re-read your code. Look over all your changes to see if there are any mistakes.

- A well-organized PR description

If you are submitting a pull request, especially to an open-source community, always read the contributing guidelines first. They describe how to develop, how to submit, what should be checked, and so on. Although the exact guidelines differ between repositories, there are some general guidelines to keep in mind:

- A Clear Title. The title follows the same style as a conventional commit message but summarizes what you have done across all your commits. It should indicate the issue, the changes made, and the type of PR.

- A Detailed Description. A comprehensive description should provide any relevant context or background information and technical details. For example, why are you submitting this pull request? What issue have you solved? Will your PR introduce potential risks or side effects (such as performance degradation)? How can this be improved in the future? Detailed historical records of technical decisions and other design choices facilitate collaboration, help with future reference (e.g., maintenance and debugging) and, most importantly, save time on understanding.

- Always review your changes first. Make sure you submit correct changes.

- Provide references. If you are submitting to open-source repositories, these are often the issues related to the PR. If you are working on research projects, these are often the materials that helped you make your decisions.

If you want to learn more about modern code review in large companies, I recommend reading the ICSE-SEIP '18 paper Modern Code Review: A Case Study at Google.

Conclusion

I hope my personal experience helps you write better code and collaborate more effectively.